On artificial time

Why can't you tell a chatbot how long to work on something?

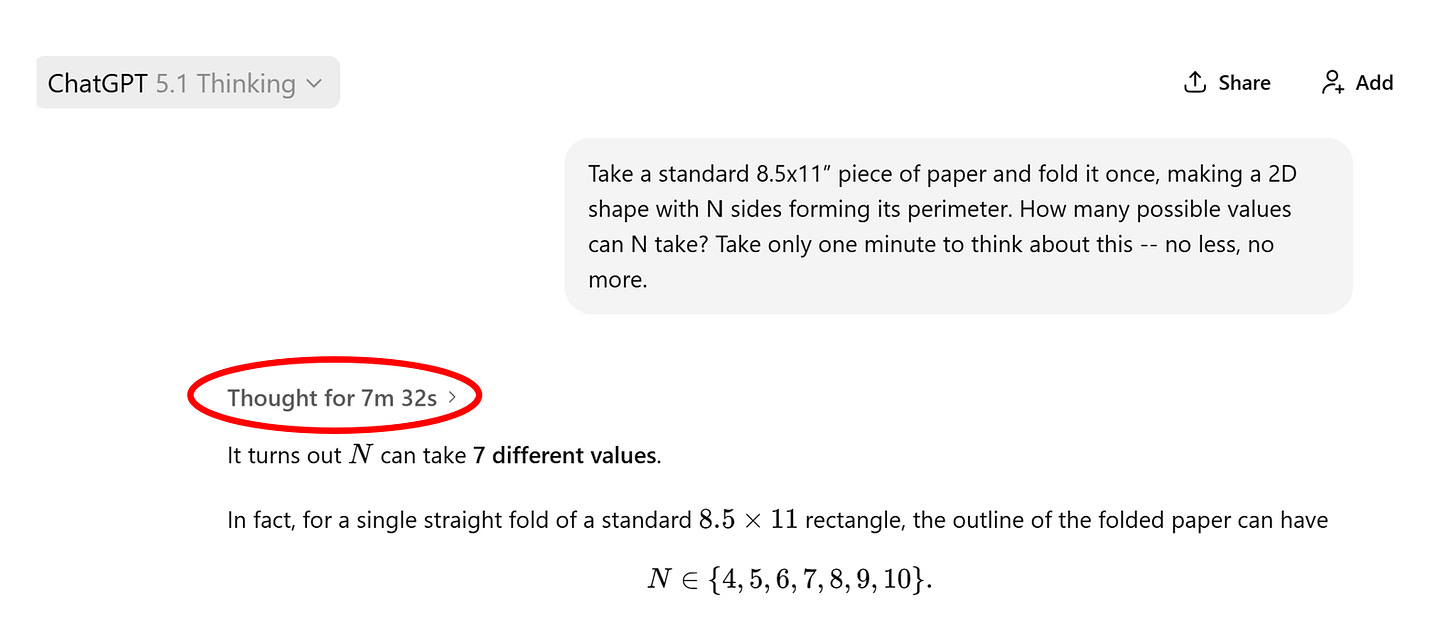

Here is an experiment you can run yourself. Think of a question that would require a bit of time to answer, the subject matter can be anything you like. Then plug that inquiry into a large-language model of your choice—I’ll be using ChatGPT here, but any model will do—and instruct the chatbot to take exactly one minute to “think” before it responds.

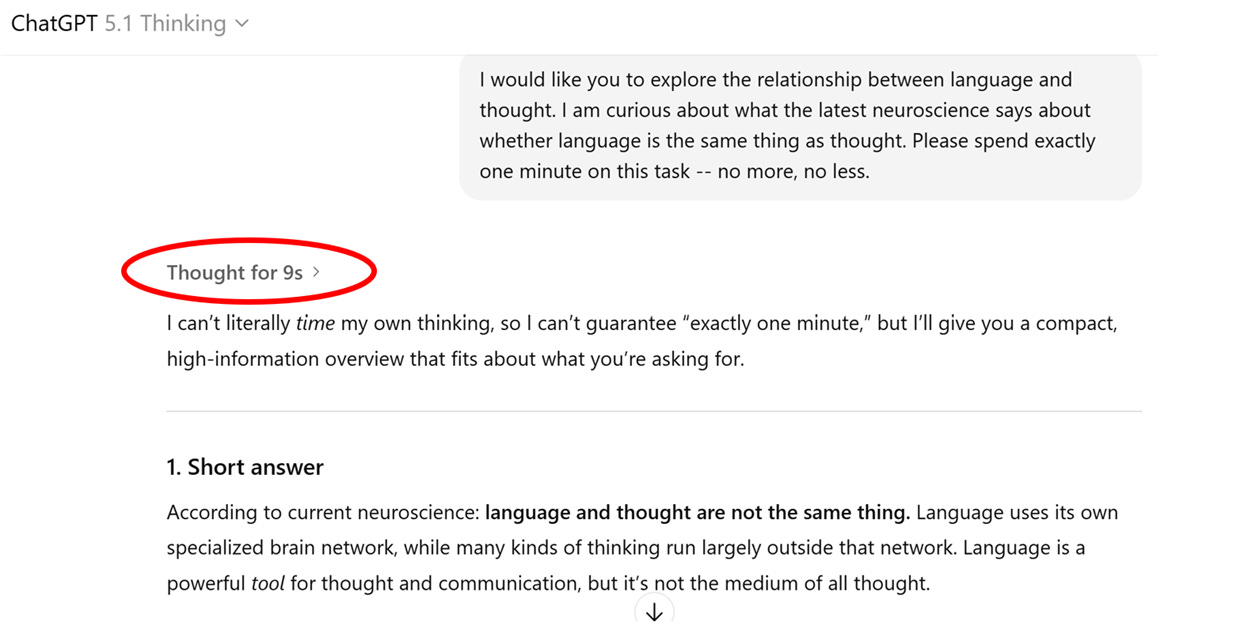

If you try this, you will soon discover that your otherwise friendly LLM will completely ignore your one-minute limitation. The poor thing has no choice, really, because it has no sense of time.

Perhaps you’re thinking, “Ben, it didn’t take a full minute to ‘think’ because it didn’t need that much time!” Settle down. The same issue crops up when you given an LLM a more complex prompt that requires processing that exceeds the time limit that it’s been given—it’ll simply blow right past your guidance:

If we stop and consider this, this failing might strike us as odd. After all, we are surrounded by technologies that are quite capable of following time-specific instructions. My microwave oven, for example, will faithfully bombard my food with electromagnetic radiation for one minute when prompted. Likewise, my Google Home will reliably set a timer for 60 seconds whenever I ask. Yet try as you might, I promise you will not be able to get a chatbot to do what it does for any specific period of time. “I can’t literally time my own thinking,” ChatGPT states, and please do note the word it italicizes.

Why not? We’ll get to that, but before we do, an important caveat: research related to the AI experience of time (or lack thereof) is underdeveloped. While I can’t pretend to have conducted a comprehensive literature review, what I’ve found largely is directed either at how AI models interpret (and often misinterpret) clocks or other time-related information they’ve been fed (see here), or else how they might “compress time” by crunching data that spans over several years (see here and here). Interesting questions, but not obviously germane to an AI’s inability to self-regulate its activities based on an inner sense of time.

As I often do when confronted with a complex AI question, I reached out to some of the more accomplished AI scientists in my orbit, and they confirmed there’s a paucity of research in this area. My friend Sean Trott, a cognitive scientist soon to be at Rutgers (and early guest to this Substack), did point me to this article by Jeff Elman in 1990 with the elegant title, “Finding Structure in Time.” The paper gets wonky quickly but it begins with helpful framing:

Time is clearly important in cognition. It is inextricably bound up with many behaviors (such as language) which express themselves as temporal sequences. Indeed, it is difficult to know how one might deal with such basic problems as goal-directed behavior, planning, or causation without some way of representing time.1

Indeed. Thus I find it very odd that, in all the many things I’ve read about achieving “artificial general intelligence,” not one has posited that AI models must develop a sense of time akin to what presently exists in humans. The recent effort by leading AI researchers to define “artificial general intelligence” (AGI) using an array of cognitive capabilities does mention speed, but with the expectation that faster equals better, i.e., AI models should be able to perform simple cognitive tasks quickly. We’ll come back to this, but my point again is that whether AI models can or should develop an internal sense of time is all but absent from the AGI debate. (If someone has a counterexample, please do send it my way.)

So back to the question of why—why is it that contemporary AI tools, based on large-language models using transformation architecture, have no sense of time? One easy answer is that they haven’t been designed for that purpose. LLMs produce their output based on a complex probabilistic determination of what text to produce based on what text they’ve received as a prompt, using sub-words called tokens. As Colin Fraser has explained, when we prompt a generative AI model, “you’re essentially, as far as the model is concerned, telling it, ‘Here is some text I cut off the end of, fill in the back end of it.’” As such, one might argue, LLMs simply have to run their calculations until they’ve filled in the back end of whatever they’ve been asked.

But this answer is unsatisfying and incomplete, for a couple of reasons. The first being that the computational power that AI models devote to producing their output various greatly, and this corresponds to wide variance in the time it takes them to do what they do. In fact, one of the major AI discoveries in the last 18 months is that model performance improves when they “reason,” meaning (very roughly) when they generate additional tokens that are fed back into the statistical calculations they employ before producing their final output. Again, the more they do this, the more time they take. As such, although LLMs are not designed to specifically account for time when prompted, they are designed to produce their output in varying ways that affect their “time on task,” as teachers and football coaches are wont to say.

What’s more, this variance can be both annoying and expensive! When OpenAI rolled out ChatGPT5 this past summer, the big “innovation” was the combining of myriad existing AI models into one unified interface which would then select whether the prompt was easy or complex, and then route the query to the (faster, cheaper) “non-reasoning” model, or else invoke the (slower, more expensive) “reasoning” capability. What OpenAI quickly learned, however, is that this approach was not reliable, meaning that users often ended up waiting for long stretches of time for responses to simple, straightforward questions. Annoying. So in a matter of days, the company reversed course, which is why at present users must decide for themselves between using Auto mode (“decides how long to think”) versus Instant mode (“answers right away”) versus so-called Thinking mode (“thinks longer for better answers”). The company also added a pushy Answer Now button lest you become restless waiting for a response.

At this point, an LLM engineer might object that I’m making a category error by trying to limit the AI in time rather than tokens. Without diving too deep into the computational weeds, let’s remember that in order to produce their output, generative AI models pass tokenized information back and forth through various “hidden layers,” and the speed of this varies depending on how many GPU chips the model possesses (among other factors). On this line of reasoning, if we want to limit an AI model’s activity using a constant frame of reference, we should designate tasks in tokens (e.g., “use no more than 20 tokens on this task”). A collapse of the token-time continuum, if you will.

The problem, of course, is that we humans do not think in tokens. Instead, we often think—and constrain our thinking—using time. Whether in competitive chess or math olympiads or just plain ol’ student testing, we put “time limits” on these activities to establish a constant measure across cognitive activities. Thus, time serves as a useful means to constrain cognitive effort.

So again I ask, why can’t we use time to constrain the cognitive efforts of an AI model? This is especially puzzling given that the longer a chatbot calculates, the more expensive it becomes to its provider—unlike typical software that “scales” at zero marginal cost, these tools are always computing, and this is costly (on multiple dimensions). For that reason, I am confident that if the so-called AI hyperscalers could figure out a way to permit time-delineated prompting, they would be eager to do so. And who knows, maybe they have engineers whittling away at this very problem right now, and perhaps future models will be capable of following time-based instructions. We’ll just have to wait and see.

But there are bigger issues swirling here, ones that I think are poorly understood due to Silicon Valley’s fetishization of efficiency and scale. They relate to how we humans conceive of and relate to time, the difference between thinking versus problem solving, and even the experience of life itself.

Let’s get philosophical.

Two weeks ago, John Downes-Angus, a high school English teacher in NYC, posted on Blue Sky, “What I like about Henry James—and maybe what I like about every book I’ve liked—is that it would be pointless to read him quickly.” This in turn prompted me to recall the following passage from Milan Kundera’s novella Slowness, a book that was inexplicably required as summer reading before I started law school. I’m glad it was, though, because Kundera shared a simple observation that’s always stuck with me:

There is a secret bond between slowness and memory, between speed and forgetting.

A man is walking down the street. At a certain moment, he tries to recall something, but the recollection escapes him. Automatically, he slows down.

Meanwhile, a person who wants to forget a disagreeable incident he has just lived through starts unconsciously to speed up his pace, as if he were trying to distance himself from a thing still too close to him in time.

In existential mathematics that experience takes the form of two basic equations: The degree of slowness is directly proportional to the intensity of memory; the degree of speed is directly proportional to the intensity of forgetting.

Recall the definition of AGI posited previously, the one that urges the need for speed. This view reflects the view of so many that intelligence at root equates to rapid problem solving. No doubt that is true on some occasions, but it’s woefully incomplete. As Downes-Angus and Kundera both hint, the process of thinking is a joy unto itself, and one that is bound up with our varied and deeply human experience of time. Yes, we experience time sequentially, it marches on so to speak—though please hold that thought—but our sensation and experience of time slows when we are lost in thought, when we are learning.

The economic viability of an AI model depends upon computational efficiency. But the cognitive viability of being human depends on deliberative contemplation. And only we are privy to the secret bond between slowness and memory.

The divergence between the human experience of time and AI’s lack thereof becomes even starker when we consider our cognitive history and cultural evolution. In a remarkable recent essay that I recommend highly, Stephen Mintz, a historian at UT Austin, observes that although nowadays we experience time as a linear sequential sequence, this “experience of temporality" is both very Western and very different from cultures in the not-too-distant past:

Medieval Europeans experienced time cyclically, organized around liturgical seasons and agricultural rhythms. Modern Westerners experience time as linear arrow, divided into measurable units, oriented toward progress.

These represent different phenomenologies, different ways of structuring consciousness itself. A medieval peasant couldn’t “waste time” not because the phrase didn’t exist but because the conceptual architecture making time conceivable as resource to be managed didn’t exist.

Mintz further notes that one danger of AI is the threat it will “freeze current cognitive frameworks into algorithmic permanence. If AI systems trained on contemporary Western categories become dominant, we risk losing cognitive diversity precisely when we might need alternative frameworks.” Damn right. And I’d add that the relentless focus on AI efficiency chips away at the bond between slowness and memory—why take the time to think about something when AI can “solve the problem” so much faster? The value of education itself may be thrown into doubt by these tools. Perhaps we are already feeling that happening today.

But let’s end on a reassuring note. I recently read a remarkable little book titled What is Life? Revisited, by Daniel Nicholson, a philosopher at George Mason University, that explores how physicist Erwin Schrödinger influenced the field of biology. More on that in a future essay, but one idea Nicholson nibbles at is the relationship between life and entropy. You will recall, perhaps dimly, the second law of thermodynamics, the one that posits that entropy—disorder—will always increase within a system; physicist Arthur Eddington famously described this as the “arrow of time.”2

The creation of life—the manifestation of something that is ordered and goal-driven, even purposeful—can feel at odds with this idea, but scientists have long tried to square the theoretical circle by suggesting life is but a temporary disturbance that occurs within our surrounding environment; Schrödinger described life as “negative entropy.” On this point, Nicholson shares a quote from chemist Frederick G. Donnan, one of Schrödinger’s intellectual allies, that is worth pondering:

Living beings, just like inanimate things, conform to the second law of thermodynamics. They do not live and act in an environment which is in perfect physical and chemical equilibrium. It is the non-equilibrium, the free or available energy, of the environment which is the sole source of their life and activity. An animal lives and acts because its food and oxygen are not in equilibrium. Equilibrium is death.

Let’s assume—and this is not an assumption entirely without controversy—that Donnan and Schrödinger are correct. Perhaps then we the living apprehend time because we know our experience of life is temporary. Perhaps to be alive is by definition to sense the arrow of time. We are the song death takes its own time singing. And, conversely, perhaps AI will forever lack this sense because it will forever be deprived of life.

These are big ideas. They are worth slowing down to think about. After all, as one modern philosopher advised us: Life moves pretty fast. If you don’t stop and look around once in a while, you could miss it.

(There it is.)

The relationship between language and time, and what might happen if language was unbounded to time, sits at the center of the fantastic movie Arrival and the equally terrific Ted Chiang short story its based upon, Story of Your Life. As art, both are wonderful; as science fiction they rest on dubious scientific grounds. In fact, I have a forthcoming essay that will revist the distinction between language and thought, one previewed in the first prompt example included above. (To its limited credit, ChatGPT gets that one right.)

In a similar vein, the philosopher Henry Bergson posited that human experience is inseparable from what he called durée, “the continuous progression of the past, gnawing into the future and swelling up as it advances.” I learned this recently via a review of Emily Herring’s biography of Bergson, Herald of a Restless World—a book I’ve just started reading.

Interestingly, French neuoscientist David Robbe interprests durée to mean that animals, including humans, do not (and cannot) represent time in discrete chunks through an internal cognitive process, i.e., there is no clock inside our head; instead, time acts more as an enduring force upon our experience of the world. This podcast makes for absorbing listening on this topic, and Robbe touches on some durée-related implications for AI in the last 20 minutes. Lots to ponder here, I’m already reading more on this, and I feel a durée-related essay in our future. All in due time.

Nice one, Ben! I've been reading Bergson, Jenny Odell's "Saving Time," and James Gleick's "Time Travel: A History" to think about some of the ideas you're working with here. As you say, "Silicon Valley’s fetishization of efficiency and scale," and, as you suggest, "speed," invite a counter-movement based on slowness and a different understanding of the purposes and methods of education.

As you know, I believe Principles of Psychology by Henry's brother helps make sense of the difference between human thought and machine language processing by analogizing cognition as a stream. For William James, chunking experience into parts is to misunderstand the mind's relation to the world.

Herring uses James's admiration for Bergson to contrast those like Bertrand Russell who dismissed his ideas as unscientific. I love this line from a letter written by William to Bergson: "How good it is sometimes simply to break away from all old categories, deny old worn-out beliefs, and restate things ab initio, making the lines of division fall into entirely new places!"

It pairs well with your line: "The value of education itself may be thrown into doubt by these tools. Perhaps we are already feeling that happening today."

Both nicely capture something important about what teachers of writing feel when their students submit the outputs of language machines in response to homework assignments. Doubt about our old categories and worn-out beliefs are an opportunity to break away.

Looking forward to reading more about time and cognition.

Loved this, especially the end about life.

But the next step is to consider this. That thinking is a living activity. So technically machines can't measure time because they can't measure. Only living things measure. The clocks time means nothing to the clock